From Experiment to Production: How Our AI Support Agent Beats Intercom Fin On Every Axis

Marker.io’s CPO Emilio on how and why we ditched Intercom's Fin and built our own AI support agent in a week with Claude Code.

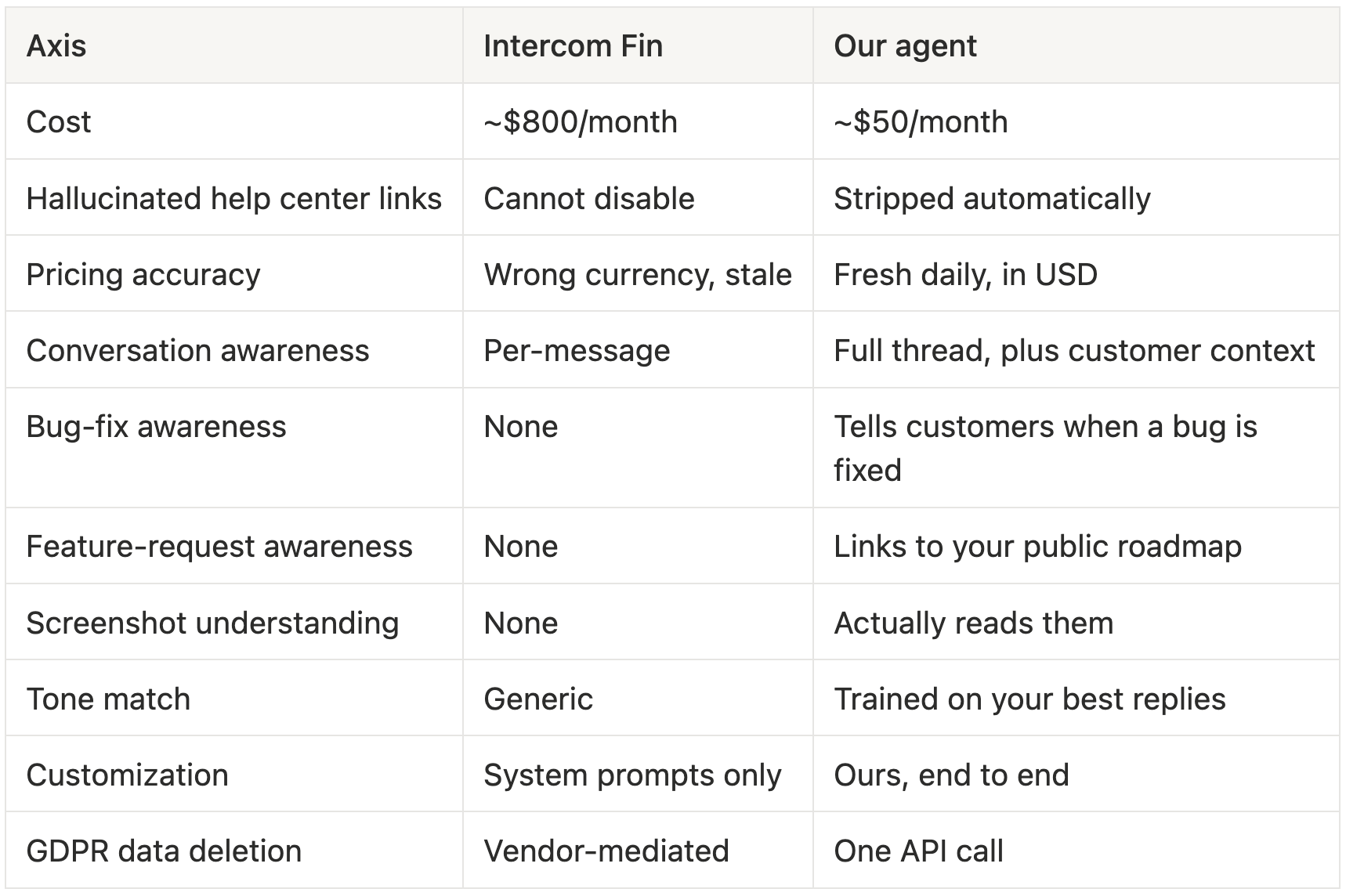

TL;DR: We replaced Intercom Fin with an AI support agent we built ourselves. It started as a one-week experiment. Three months on, it's a production-grade product that handles every customer conversation we get. It costs around $50 a month. Fin would cost us $800 a month. And on every metric that matters (accuracy, tone, integrations, control), ours wins.

Why we left Fin

Two moments pushed us out the door.

A customer asked how to set up webhooks. Fin replied with a link to a help article that didn't exist. The customer clicked it and landed on a 404. We couldn't disable that behavior, edit the reply, or even apologize through the bot.

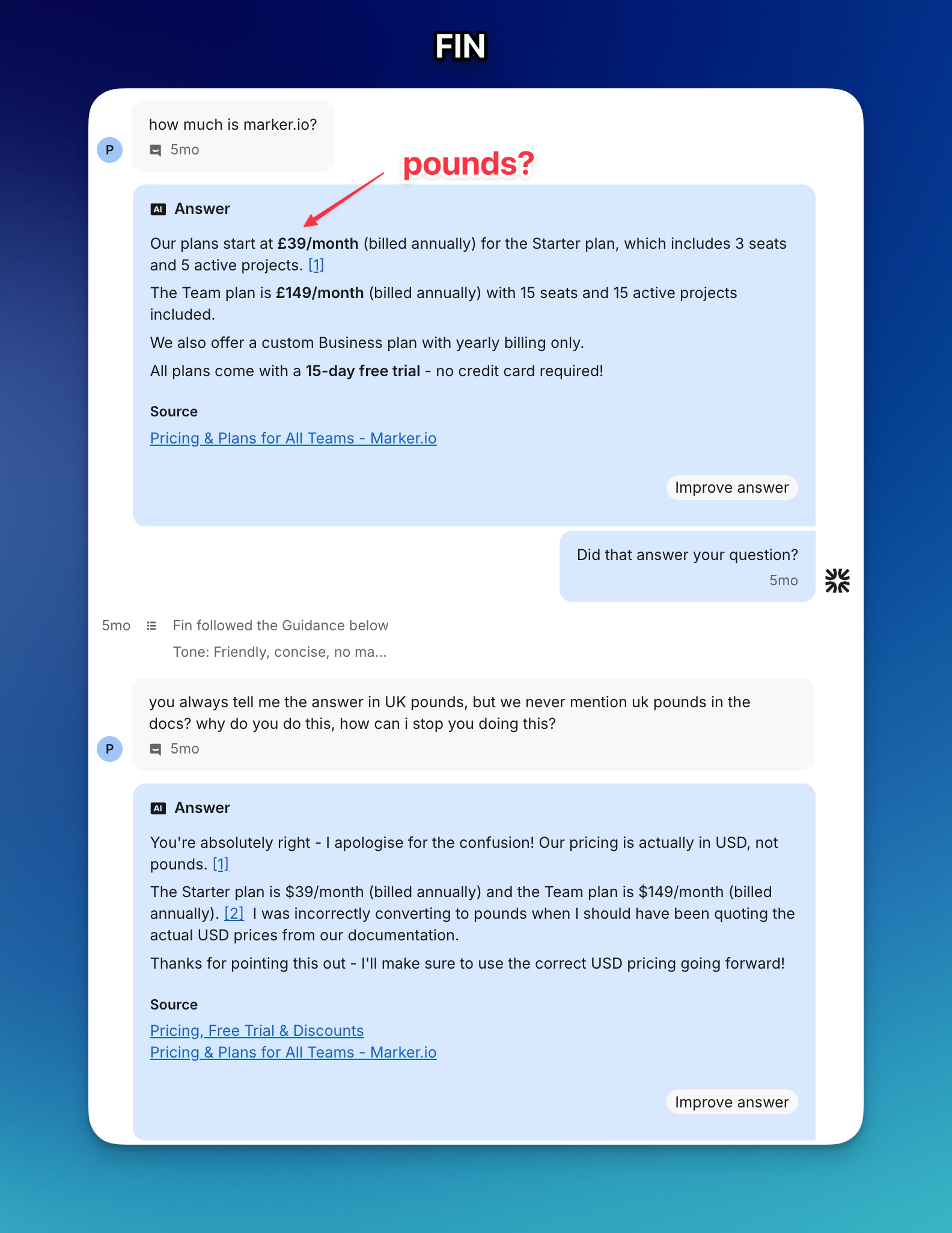

A different customer asked about our Team plan. Fin quoted £89 a month. We sell in USD. Our Team plan isn't £89. For a billing question, a wrong answer is worse than no answer.

Beyond those: Fin treated every message as isolated, didn't know when a bug had been fixed, didn't know which features were on our roadmap, and didn't sound like us. We opened a ticket with Intercom support. Two weeks later, we'd had enough.

What it took to make it production-ready

Three months and 44 deploys later, the first prototype is barely recognizable.

The bot now reads screenshots customers send. It reviews every draft for safety issues before posting. It knows when a customer's bug has been fixed in Linear and tells them. It checks our roadmap on Canny and links to the right feature request. It refreshes pricing daily from our public page, not from cached docs. And every change to how it behaves runs through an automated test suite before it ships.

Here's what changed, in plain terms.

It sees what customers see

When customers send a screenshot, the bot can actually look at it. If they show an error message, it references the exact text. If they share a workflow, it describes what they're doing and answers accordingly. No more "can you describe what you see?"

It knows your bug status

When a customer reports something we've already fixed, the bot says so. When they hit a bug we're tracking, our agents see the open issue as an internal note before they reply. The wiring comes straight from Linear.
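
To make the two paths concrete, here is a minimal sketch of the routing decision in Python. It is not our production code: the `TrackedIssue` shape and the `bug_status_action` name are hypothetical, and it assumes the matching Linear issue has already been looked up.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class TrackedIssue:
    # Hypothetical shape for illustration; a real Linear issue has many more fields.
    identifier: str
    title: str
    fixed: bool


def bug_status_action(issue: Optional[TrackedIssue]) -> Tuple[str, str]:
    """Return (channel, text) where channel is 'customer_reply', 'internal_note' or 'none'."""
    if issue is None:
        # No matching issue was found: nothing extra to say.
        return ("none", "")
    if issue.fixed:
        # Already fixed: tell the customer directly.
        return ("customer_reply", f"Good news: this has been fixed ({issue.title}).")
    # Still open: surface it to the human agent, not the customer.
    return ("internal_note", f"Open issue {issue.identifier}: {issue.title}")
```

The point of the split is that fixed bugs are safe to announce, while open ones stay internal so an agent decides what to promise.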

It sounds like us

The bot learns from 500 of our best past replies. It picks up how we explain webhooks, how we handle "can you extend my trial," and how we troubleshoot widget setup. Generic chatbot in, our voice out.

Agents can take over in one comment

Agents type "@ai stop" to silence the bot on a conversation and "@ai start" to re-enable it. They can also add an instruction, such as "@ai check if they're on the right plan", to regenerate the draft with extra context. The bot is a coworker, not an autopilot.
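
The @ai commands above amount to a small parser over agent comments. A rough sketch, assuming anything after "@ai" that isn't "stop" or "start" is treated as a regenerate instruction (the `Command` type and function name are illustrative, not our actual code):

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class Command:
    action: str       # "stop", "start", or "regenerate"
    instruction: str  # extra context for a regenerate, otherwise ""


# "@ai" must be a whole word, so "@aim ..." is not treated as a command.
_AI_PREFIX = re.compile(r"@ai\b\s*(.*)", re.IGNORECASE | re.DOTALL)


def parse_agent_comment(comment: str) -> Optional[Command]:
    """Turn an agent's comment into a bot command, or None if it isn't one."""
    match = _AI_PREFIX.match(comment.strip())
    if match is None:
        return None
    rest = match.group(1).strip()
    if rest.lower() in ("stop", "start"):
        return Command(rest.lower(), "")
    if rest:
        return Command("regenerate", rest)
    return None  # a bare "@ai" carries no instruction
```

For example, `parse_agent_comment("@ai check if they're on the right plan")` yields a `regenerate` command whose instruction is the text after the prefix.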

A daily summary lands in Slack

Every morning at 8am we get a categorized digest of yesterday's support conversations. Bugs, feature requests, billing, other. Each one shows whether the bot resolved it or a human jumped in. We didn't have this with Fin.
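
The digest itself is simple grouping. Here is a sketch of how such a summary could be assembled, assuming each conversation has already been labeled with a category and a resolved-by-bot flag. The dictionary keys are made up for the example, and posting to Slack is left out.

```python
from collections import defaultdict

CATEGORIES = ["Bugs", "Feature requests", "Billing", "Other"]


def build_digest(conversations):
    """Group yesterday's conversations by category and format them as text."""
    grouped = defaultdict(list)
    for convo in conversations:
        # Anything with an unrecognized label falls into "Other".
        category = convo["category"] if convo["category"] in CATEGORIES else "Other"
        grouped[category].append(convo)

    lines = []
    for category in CATEGORIES:
        items = grouped.get(category, [])
        if not items:
            continue
        lines.append(f"{category} ({len(items)})")
        for convo in items:
            handled = "bot resolved" if convo["resolved_by_bot"] else "human stepped in"
            lines.append(f"- {convo['subject']}: {handled}")
    return "\n".join(lines)
```

Keeping the category order fixed means the digest looks the same every morning, which makes it quick to scan.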

The unsexy part: making it safe

We didn't want a faster bot. We wanted a bot we could trust on Enterprise conversations.

That meant building a safety pipeline that runs before any reply goes out. The bot can't link to a help article that doesn't exist. It won't get talked into ignoring its instructions through a screenshot. If anything looks off, it posts the draft as an internal note for a human to review and stops auto-replying on that conversation.
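
One of those checks, the "no links to articles that don't exist" rule, can be sketched like this. It assumes you keep a set of known-good help article URLs (stored without trailing slashes). The function names and the `help.example.com` domain in the usage note are placeholders, not our real pipeline.

```python
import re

# Rough URL matcher for the sketch; stops at whitespace and closing brackets.
URL_PATTERN = re.compile(r"https?://[^\s)>\]]+")


def unknown_links(draft, known_urls):
    """Return every link in the draft that is not in the known-good set."""
    found = [url.rstrip(".,;:!?") for url in URL_PATTERN.findall(draft)]
    return [url for url in found if url.rstrip("/") not in known_urls]


def review_draft(draft, known_urls):
    """Decide whether a draft may be sent or must go to a human."""
    bad_links = unknown_links(draft, known_urls)
    if bad_links:
        # Anything suspicious: internal note only, and stop auto-replying.
        return {"action": "internal_note", "pause_auto_reply": True, "reasons": bad_links}
    return {"action": "send", "pause_auto_reply": False, "reasons": []}
```

With `known_urls = {"https://help.example.com/webhooks"}`, a draft linking to that page is sent, while a draft linking to `https://help.example.com/made-up` is held as an internal note.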

We also built an evaluation suite that runs whenever we change how the bot behaves. It generates real drafts against test cases and grades them on accuracy, tone, and policy compliance. If quality drops, the change doesn't ship. That's the unsexy thing that turned an experiment into a real product.
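
The "if quality drops, the change doesn't ship" gate boils down to comparing scores before and after a change. A minimal sketch, assuming each test case has already been graded from 0 to 1 on each dimension. The `tolerance` value and the function name are assumptions for illustration, not our real thresholds.

```python
from statistics import mean


def should_ship(baseline, candidate, tolerance=0.02):
    """Compare per-dimension average scores; block the change on any regression.

    Both arguments map a dimension name (e.g. "accuracy") to a list of
    per-test-case scores between 0 and 1.
    """
    regressions = {}
    for dimension, base_scores in baseline.items():
        base_avg = mean(base_scores)
        new_avg = mean(candidate[dimension])
        # Allow a small tolerance so grading noise doesn't block every change.
        if new_avg < base_avg - tolerance:
            regressions[dimension] = (round(base_avg, 3), round(new_avg, 3))
    return (not regressions, regressions)
```

Returning the regressed dimensions alongside the verdict tells you which rule or prompt change to look at first.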

For compliance, we documented every sub-processor publicly, routed Claude calls through AWS in the EU for data residency, and built a one-call API that scrubs a customer's data from the bot on request. GDPR-ready from day one in production.

How it stacks up against Fin

What we learned

A few honest takeaways from getting here.

An MVP is not a product. The first version worked because volume was low and we paid attention. Production needs guardrails, retries, and an actual safety story.

Prompts drift without tests. Adding "rules" to a system prompt works for the first ten. By rule twenty you're silently breaking earlier ones. An eval suite that catches regressions is the difference between a fun experiment and something you can leave running.

The win is control, not just cost. Yes, we save around $750 a month. The bigger win is that when something goes wrong, we fix it in minutes instead of filing a ticket and waiting.

Should you build your own?

If you're a small support team with an opinionated tone, real integrations, and a desire to control how your AI talks to your customers, building your own is no longer a brave call. The hard parts (the model, the retrieval, the prompting) are well-documented. The unsexy parts (safety, evals, staging) are doable in a few weeks of focused effort.

If you're a five-person team without those constraints, Fin is fine.

For us, the answer is settled. Our agent is in production. It handles every customer conversation we get. It's no longer an experiment.
