User Acceptance Testing (UAT): Meaning, Definition, Process


User acceptance testing (UAT) is the final stage of testing before launch, where real users or stakeholders validate that a product works in real-world conditions. It helps teams catch workflow issues, unclear requirements, user experience challenges, and last-minute blockers before they affect customers.

In this guide, you’ll learn what UAT is, who should be involved, and how to run it effectively.

What is user acceptance testing?

User acceptance testing is the final check that a product is ready for the real world.

Instead of focusing on bugs alone, UAT validates whether users can complete real tasks successfully, whether the product meets business requirements, and whether stakeholders are comfortable approving it for launch.

That’s why UAT matters: software can pass QA and still fall short in practice. A workflow might be confusing, an approval step might be missing, or a feature might technically work without meeting the user’s actual needs.

By testing realistic scenarios before release, teams can catch those issues early and launch with more confidence.

What is the purpose of UAT?

The purpose of user acceptance testing is to validate two critical aspects:

- User requirements. Does the app meet user expectations? Is it easy to navigate and use?

- Business requirements. Can the app handle real use cases efficiently and effectively?

In other words, the software should help users accomplish real-world tasks without hurdles.

Validation of these requirements usually comes in the form of stakeholder sign-off against the agreed acceptance criteria: for example, when your client confirms they are satisfied with the final version of the app or website.

UAT vs QA: what’s the difference?

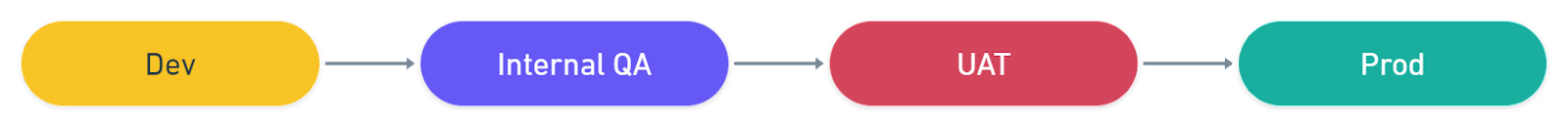

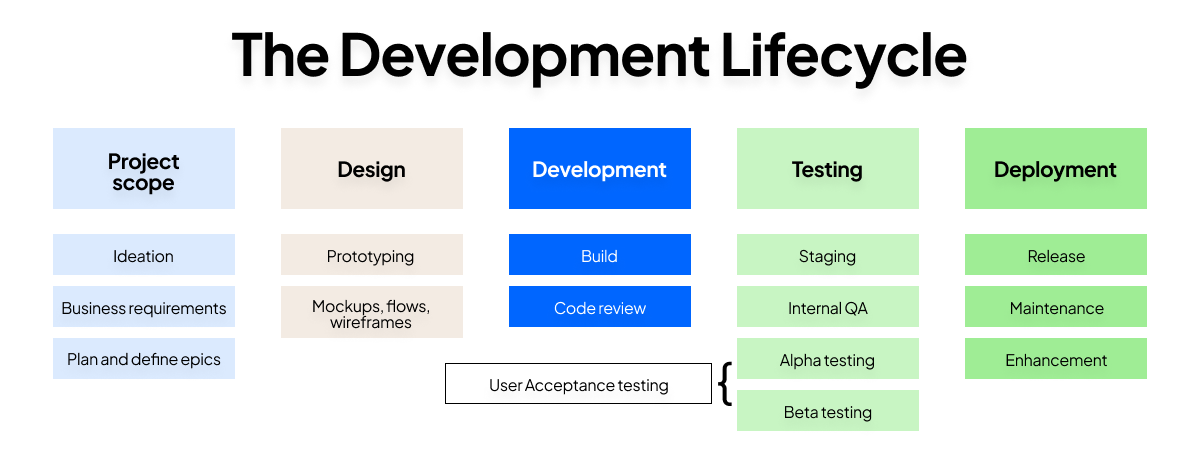

Internal QA focuses on technical issue resolution and is executed by the QA team, whereas user acceptance testing is performed by end-users or stakeholders to ensure the software meets real-world expectations.

Here’s a table outlining the key differences:

Both QA and UAT involve a lot of software testing and happen late in the software development life cycle, so it's not uncommon to confuse the two. At the end of the day, user acceptance testing is a form of quality assurance.

Here's a diagram of the development lifecycle to visualize where QA and UAT sit in it.

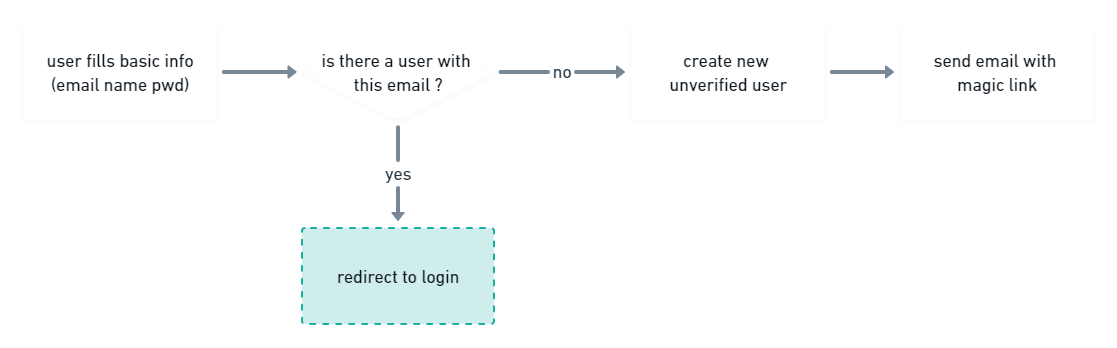

To further understand the difference between the two, let’s take a standard sign-up flow as an example.

Typical test cases in the internal QA phase for a sign-up flow might include:

- Are all input fields and buttons usable?

- Does the email verification function run properly?

- Are there any unexpected bugs at any point in the workflow?

UAT test cases, however, aim to answer user-centric questions such as:

- Are testers filling out the correct information?

- Do they understand what’s happening when being redirected to the login page?

- Are they opening their email and going through the verification steps?

Read more in our blog comparing QA vs. UAT.

What are the benefits of user acceptance testing?

There are plenty of benefits to running UAT, primarily that it helps ensure the quality of your apps, products, and websites. If you're still on the fence, here are some more reasons you should be running UAT processes:

- Early issue detection. While developers can catch many technical issues during code reviews, user acceptance testing brings the perspective of an end-user to identify potential confusion.

- Fresh perspective. Developers can sometimes miss overarching usability or flow issues. First-time users provide fresh insights. If they find any aspect of the app unintuitive, they'll raise it.

- Real-world environment. UAT offers a production-like setting for testing. By mirroring your production database, you get to observe genuine user interactions and behaviors, rather than just hypothetical or "expected" use scenarios.

- Broad testing scope. With multiple testers, you amplify the chances of spotting even the most obscure issues and bugs.

- Mitigate risks pre-launch. Identify and rectify significant issues, such as confusing user interfaces, security vulnerabilities, or critical bugs, before the software reaches the broader audience. Successful user acceptance testing ensures your app is robust, secure, and user-friendly when it goes live.

- Objective feedback. UAT often involves users who are new to your software and lack any biases. They don’t have prior notions about its functionalities or design, and so their feedback is candid and impartial.

Who performs UAT?

User acceptance testing is performed by end users and stakeholders: the people who will actually use, approve, or rely on the product in real-world conditions.

That usually includes end users, clients, business stakeholders, product owners, or subject matter experts. Their role is to validate that the product supports real workflows, meets business requirements, and is ready for release.

The QA team may help organize the process, but they are not usually the people who perform UAT. In most organizations, QA is responsible for preparing the test plan, setting up a UAT environment that closely mirrors production, writing or reviewing test cases, and making sure issues are documented clearly.

In other words, QA facilitates UAT, while users and stakeholders provide the final validation.

Who participates can vary depending on the project. For example, a client may handle sign-off in an agency project, while internal business teams may run UAT for an enterprise rollout. For a website launch, UAT often involves marketers, content and design teams, regional stakeholders, legal reviewers, or anyone responsible for checking that the site works as expected before go-live.

The most important rule is simple: the people performing UAT should be close enough to the real use case to judge whether the product is actually ready.

Types of user acceptance testing

There are several different types of user acceptance testing, depending on what you need to validate before launch.

Some types focus on whether the product works for real users. Others focus on whether it meets operational, contractual, or legal requirements.

The main types of UAT include:

- Alpha testing involves testing the product internally before it is exposed to a wider group of users.

- Beta testing involves releasing the product to a limited group of real users to gather feedback in real-world conditions.

- Contract acceptance testing validates that the software meets pre-agreed specifications and deliverables.

- Operational acceptance testing checks whether the product is ready for day-to-day use, support, maintenance, and handover.

- Regulation acceptance testing verifies compliance with legal, regulatory, or industry-specific requirements.

It’s also worth clearing up a common point of confusion: unit testing, integration testing, system testing, regression testing, and functional testing are not subcategories of UAT. They are separate software testing phases that happen earlier in the software development lifecycle.

Meanwhile, black box testing is not a type of UAT specifically. It’s a testing approach that can be used during different stages of the software testing process, including UAT.

Read more in our article on the types of user acceptance testing explained

In this guide, we’ll focus on two of the most common and practical UAT types: alpha testing and beta testing.

Alpha testing vs. beta testing

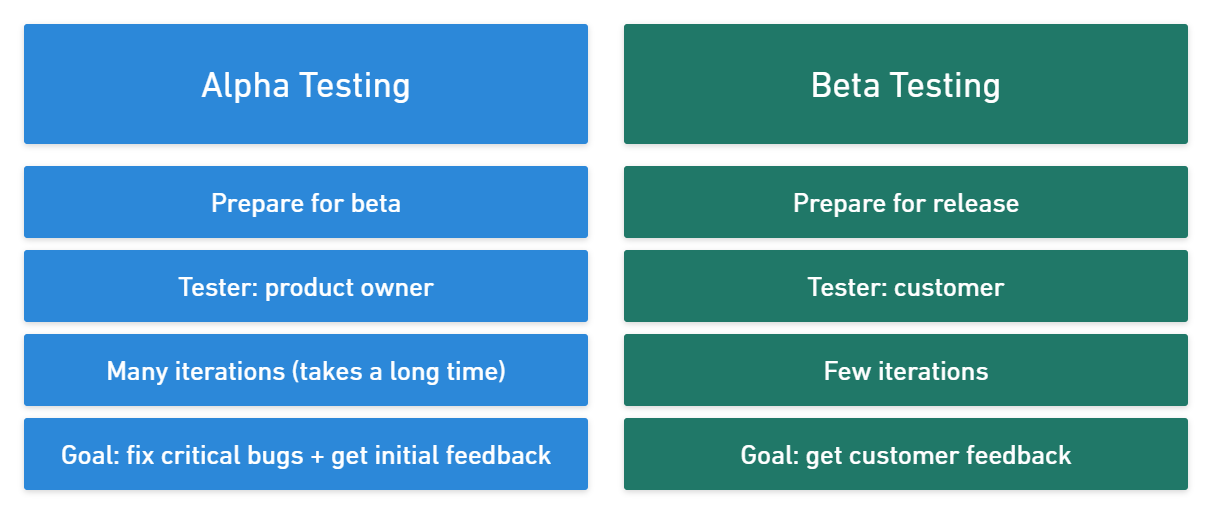

Alpha testing and beta testing are both valuable parts of the UAT process, but they happen at different points and serve different goals.

Alpha testing usually happens first. It helps teams catch major issues before the product reaches real users. Beta testing comes later and focuses more on validating the experience with a limited group of actual users.

A simple way to explain the difference is with a comparison table like this:


With a combined UAT, website feedback, QA, and bug tracker tool like Marker.io, you can manage UAT testing throughout the entire process.

In alpha testing

- Your testing team is usually internal. That may include QA, product, support, business analysts, or other stakeholders.

- Critical issues may still exist. Broken workflows, missing functionality, and major usability problems can still appear at this stage.

- It is usually more controlled than beta testing. The goal is to test in a safe environment and fix the biggest issues quickly.

- Your main objective is to prepare the product for broader exposure by improving stability, usability, and overall readiness.

In beta testing

- A limited group of real users becomes your testing team. These beta testers should reflect your target audience and intended users as closely as possible.

- The product is usually more stable by this point. The most serious issues should already have been addressed during alpha testing.

- This phase is often shorter and less controlled. The goal is to observe how the software behaves in more realistic conditions.

- Your main objective is to collect feedback, confirm that the product meets user expectations, and validate whether it is ready for the final stage before launch.

One important note: not every team runs both phases formally. Some products move straight into a lighter beta program, while others keep alpha testing entirely internal. But the logic stays the same: alpha testing helps you stabilize the product, and beta testing helps you validate it with real users.

How to conduct user acceptance testing: A step-by-step guide

In this section, we'll walk through a real-world example: the UAT we ran when we released our domain join feature.

Let's break down the key steps:

1. Define project scope and acceptance criteria

The first step is to clearly define the project scope and objectives and document them.

Throughout the user acceptance testing process, you should regularly go back to your documentation to verify scope, customer needs, and other requirements.

With this documentation, you can verify at any point that:

- Technical objectives have been met.

- The pain point is gone.

All in all, defining your project scope saves a ton of headaches.

- The whole team is aligned: “This is what we’re trying to achieve, and this is how we’re going to do it.”

- All information is centralized: If there's ever uncertainty about the software’s behavior during testing, you can refer back to this foundational document.

- You don’t go over (or under) scope: Avoiding unplanned features during testing is crucial.

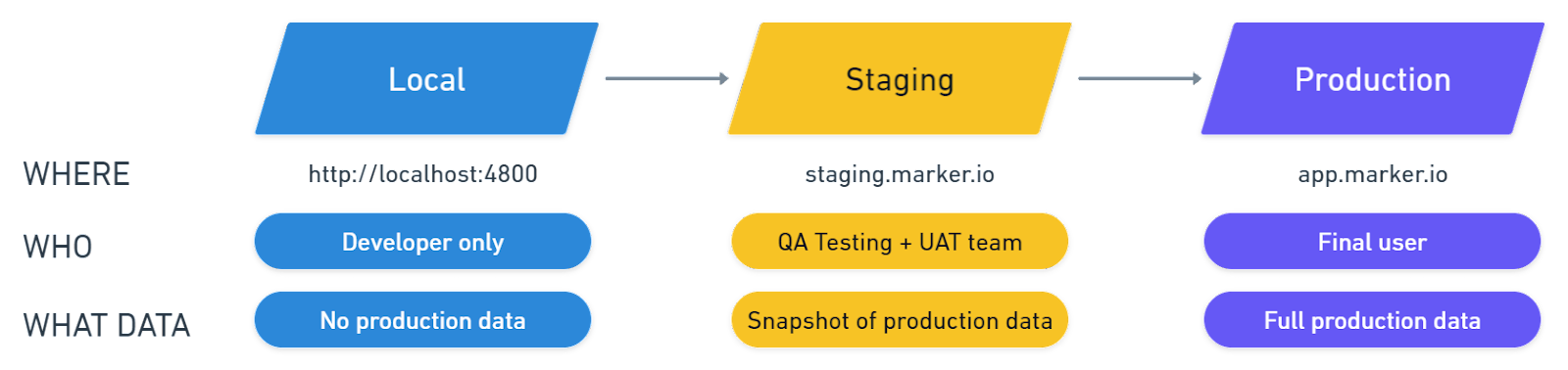

2. Set up a UAT environment

The ideal way to observe real users testing your app would be in production, right?

But you can’t push a new version of your software to prod and “see what happens” just like that... for obvious reasons.

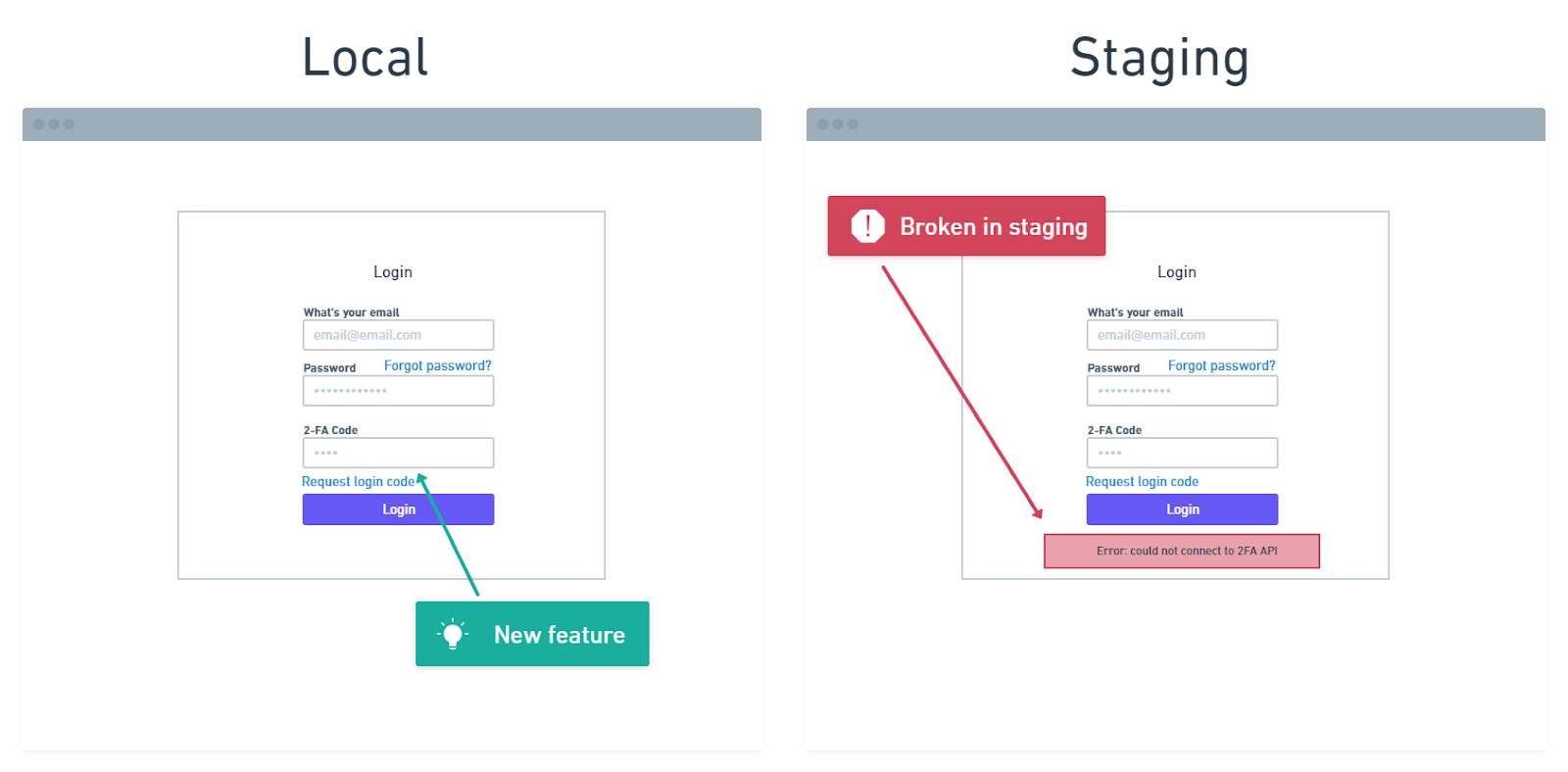

The next best thing is a staging environment. A UAT environment is usually a staging or pre-production environment that closely mirrors production and is used for user acceptance testing.

A staging environment allows you to run tests with production data and against production services, but in a non-production environment.

For example, at Marker.io, we test changes on staging.marker.io, a password-protected version of our app. Stakeholders can then easily log on and start identifying UAT issues.

The same logic applies when you run UAT tests on a larger scale. Send a URL and login credentials to your beta testers (via e-mail or otherwise) and tell them to try and break your app!

There are a couple of other added benefits:

- Save time. When your product is in staging, you can push changes and fixes immediately – it doesn’t matter if the whole app breaks.

- Protect live data. Beta user inadvertently caused a crash? Your production database is safe.

- Better overview. Locally testing your components is great for seeing what they do independently. But it's only through staging that you can truly see how they integrate within the full software.

- Easier testing. Suppose you forgot to update a package in production, or a function working completely fine in local suddenly breaks. These issues will show up in staging already.

For UAT, it is paramount that your staging settings mirror your production settings.

Don’t fall into thinking, “Well, this is just another testing environment. It doesn’t need to be perfect.” Your staging environment should be an (almost) exact copy of production.

With an accurate clone, if something doesn’t work in staging, it won’t work in production either.
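One way to keep staging honest is to compare its settings against production before testers log in. The sketch below is only an illustration, not a description of how any particular team does it: the setting names, the example values, and the `find_config_drift` helper are all hypothetical, and it assumes you can export each environment's settings as a plain dictionary.

```python
from typing import Any

# Settings that are expected to differ between environments (hypothetical examples).
EXPECTED_DIFFERENCES = {"base_url", "database_url"}


def find_config_drift(
    production: dict[str, Any], staging: dict[str, Any]
) -> dict[str, tuple[Any, Any]]:
    """Return settings whose values differ unexpectedly between environments.

    Each entry maps a setting name to a (production_value, staging_value) pair.
    A setting missing from one side is reported with None on that side.
    """
    drift = {}
    for key in sorted(production.keys() | staging.keys()):
        if key in EXPECTED_DIFFERENCES:
            continue
        prod_value = production.get(key)
        staging_value = staging.get(key)
        if prod_value != staging_value:
            drift[key] = (prod_value, staging_value)
    return drift


# Made-up settings for illustration only.
production = {
    "base_url": "https://app.example.com",
    "node_version": "20.11",
    "feature_flags": {"domain_join": True},
    "email_provider": "smtp",
}
staging = {
    "base_url": "https://staging.example.com",
    "node_version": "18.19",
    "feature_flags": {"domain_join": True},
}

for name, (prod_value, staging_value) in find_config_drift(production, staging).items():
    print(f"{name}: production={prod_value!r}, staging={staging_value!r}")
```

Anything this kind of check reports is worth resolving before UAT starts, since every unexplained difference weakens what a passing test in staging tells you about production.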

3. Pre-organize feedback

You will also need a system to categorize and manage user feedback.

We suggest categorizing feedback into two main categories:

- Feedback that requires discussion. Complex usability issues and the odd challenging bug that’s slipped through. All issues that will require a meeting with several team members.

- Instant action feedback. Wrong color, wrong copy, missing elements – problems one person can fix alone.

4. Select a UAT tool

Quality reporting during the software testing process is tedious.

For every usability issue, you have to:

- Open the screenshot tool, capture the issue.

- Open software to annotate the screenshots, add a few comments.

- Log into a project management tool.

- Create a new issue.

- Document the issue.

- Add technical information.

- Attach a screenshot.

- Share with the right colleague or team.

For a seasoned QA expert, this is a walk in the park. But this is a user acceptance testing guide, and when you do UAT, you are bound to involve non-technical, novice QA testers – or actual software users.

We’ve found it easiest to install a website feedback widget on our staging site as a UAT solution, like Marker.io.

A UAT tool enables your testing team to report issues and send feedback straight from the website, without constantly alt-tabbing to email or a PM tool. It’s faster and more accurate for all parties involved.

On the tester side, it’s a three-step process:

- Go to staging, find a problem.

- Create a report and input details.

- Click on “Create issue” – done!

5. Create scenarios and test cases

In user acceptance testing, test scenarios and test cases should reflect real business processes, user requirements, and real-world scenarios. By this point in the software development lifecycle, earlier testing phases like unit testing, integration testing, system testing, and functional testing should already be complete. The goal of UAT is different: to confirm the software meets business objectives and user expectations.

Start with broad user scenarios, then turn them into specific UAT test cases.

For example, a website UAT testing process might include scenarios like:

- A first-time visitor signs up for an account

- A returning user resets their password and logs back in

- A customer completes a checkout flow

- A marketer publishes a new landing page in the CMS

- A regional stakeholder reviews localized content

- A legal reviewer checks cookie consent and policy links before launch

Each acceptance test should connect back to a requirement, user story, or workflow in your UAT test plan. Include the goal, steps, expected result, actual result, and any test data needed.
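If you keep test cases in a spreadsheet or a tool, the fields above map naturally onto a small record. Here is a minimal sketch of that structure, assuming a plain Python dataclass; the `UatTestCase` name, the `passed` flag, and the password-reset example are hypothetical and only there to make the shape concrete.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class UatTestCase:
    """One acceptance test, linked to a requirement, user story, or workflow."""

    requirement: str
    goal: str
    steps: list[str]
    expected_result: str
    test_data: dict[str, str] = field(default_factory=dict)
    actual_result: Optional[str] = None  # filled in by the tester
    passed: Optional[bool] = None        # None means the test has not been run

    def record(self, actual_result: str, passed: bool) -> None:
        """Store what the tester observed and whether it met expectations."""
        self.actual_result = actual_result
        self.passed = passed

    def status(self) -> str:
        """Return 'not run', 'pass', or 'fail'."""
        if self.passed is None:
            return "not run"
        return "pass" if self.passed else "fail"


# Hypothetical test case for a password-reset scenario.
password_reset = UatTestCase(
    requirement="Returning users can recover access to their account",
    goal="Reset a forgotten password and log back in",
    steps=[
        "Open the login page and click 'Forgot password'",
        "Enter the test account's email address",
        "Open the reset email and follow the link",
        "Choose a new password and log in with it",
    ],
    expected_result="The user lands on their dashboard, logged in",
    test_data={"email": "uat-tester@example.com"},
)

print(password_reset.status())
password_reset.record("Reset link opened a 404 page", passed=False)
print(password_reset.status())
```

Keeping the pass/fail judgment as an explicit flag, rather than comparing the two result strings, leaves room for testers to describe what they saw in their own words.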

Good test data matters. If your testing environment uses unrealistic content or permissions, your test results will be less useful. The closer your separate testing environment is to the production environment, the more accurate your UAT testing process will be.

You may also need broader checks like operational acceptance testing, contract acceptance testing, or regulation acceptance testing, depending on the release.

6. Recruit, onboard, and brief testers

The people who perform UAT should represent the product’s real users.

That usually means end users, clients, business stakeholders, subject matter experts, or business analysts who understand the product’s workflows and business requirements. The right UAT team depends on the project, but the goal is always the same: involve people close enough to the real use case to judge whether the software meets user needs.

For a website launch, that might include marketers, content editors, legal reviewers, regional teams, or support leads. Some teams also use alpha testing internally first, then expand into beta testing with customers or beta testers who reflect the target audience.

Once you’ve selected testers, onboard and brief them properly. Share the test plan, timeline, defined acceptance criteria, login details for the UAT environment, required test data, and instructions on how to provide feedback.

Some testers should follow structured test cases. Others should have room to explore naturally. That balance usually leads to better end-user testing and more useful feedback.

7. Run tests and capture feedback

This is the point where UAT execution begins.

Ask testers to work through the defined scenarios in realistic conditions, using realistic data. The aim is not just to check whether the software works, but whether it supports real users, real workflows, and the needs of the final release.

During UAT testing, encourage testers to flag anything that blocks a task, creates confusion, breaks content or journeys, or falls short of user expectations. That includes usability problems, broken links, missing content, inconsistent messaging, and cross-browser issues.

To keep the testing process efficient, every report should include:

- What was tested

- The steps taken

- The expected result

- The actual result

- Screenshots or video where needed

- Browser, device, and environment details

Many UAT tools, like Marker.io, offer session replay as standard and attach browser, device, and environment details to each issue automatically.

Clear reporting helps the development team and quality assurance team act faster. It also makes the software testing process easier to manage across the full UAT cycle.
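If testers submit reports through a form or spreadsheet rather than a dedicated tool, a small completeness check can catch gaps before a report reaches a developer. The sketch below assumes each report arrives as a dictionary of text fields; the field names and the `missing_fields` helper are hypothetical, and screenshots are left out of the required set because they are only needed in some cases.

```python
# Required report fields, following the list above (names are hypothetical).
REQUIRED_FIELDS = (
    "what_was_tested",
    "steps_taken",
    "expected_result",
    "actual_result",
    "browser",
    "device",
    "environment",
)


def missing_fields(report: dict[str, str]) -> list[str]:
    """Return the required fields that are absent or blank in a report."""
    return [name for name in REQUIRED_FIELDS if not report.get(name, "").strip()]


# A made-up report with a blank expected result and no device recorded.
report = {
    "what_was_tested": "Newsletter sign-up form",
    "steps_taken": "Entered an email address and clicked Subscribe",
    "expected_result": "",
    "actual_result": "Page reloaded with no confirmation message",
    "browser": "Firefox",
    "environment": "staging",
}

gaps = missing_fields(report)
if gaps:
    print("Please complete:", ", ".join(gaps))
```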

The goal is not to collect the most tickets; it’s to gather actionable feedback that shows whether the software meets business requirements and is ready for production.

8. Triage defects, retest, and sign off

Once feedback comes in, the next step is defect management.

Not every issue found during user acceptance testing has the same impact. Some defects block release. Others can wait until later. A strong UAT process means triaging issues based on severity, business impact, and whether they break the agreed acceptance criteria.

Most teams group findings into blockers, high priority, medium priority, and post-launch improvements. From there, the development team fixes the most important issues, and the UAT team coordinates retesting.
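To make the grouping concrete, here is a rough sketch of rule-based triage. Everything specific in it is an assumption for illustration: the 1 to 3 scales for severity and business impact, the score thresholds, and the `Finding`, `prioritize`, and `triage` names. Your own team's rules will differ; the point is only that the three inputs mentioned above can drive the four buckets.

```python
from dataclasses import dataclass
from enum import IntEnum


class Priority(IntEnum):
    BLOCKER = 0
    HIGH = 1
    MEDIUM = 2
    POST_LAUNCH = 3


@dataclass
class Finding:
    title: str
    severity: int         # hypothetical scale: 1 = cosmetic, 3 = task cannot be completed
    business_impact: int  # hypothetical scale: 1 = low, 3 = high
    breaks_acceptance_criteria: bool


def prioritize(finding: Finding) -> Priority:
    """Map a finding to a priority bucket using illustrative thresholds."""
    if finding.breaks_acceptance_criteria:
        return Priority.BLOCKER
    score = finding.severity + finding.business_impact
    if score >= 6:
        return Priority.BLOCKER
    if score >= 5:
        return Priority.HIGH
    if score >= 3:
        return Priority.MEDIUM
    return Priority.POST_LAUNCH


def triage(findings: list[Finding]) -> dict[Priority, list[str]]:
    """Group finding titles by priority, most urgent bucket first."""
    grouped: dict[Priority, list[str]] = {priority: [] for priority in Priority}
    for finding in findings:
        grouped[prioritize(finding)].append(finding.title)
    return grouped


# Made-up findings for illustration.
findings = [
    Finding("Checkout button does nothing on Safari", 3, 3, True),
    Finding("Confirmation email has the wrong sender name", 2, 3, False),
    Finding("Password reset copy is confusing", 2, 2, False),
    Finding("Footer link color is off-brand", 1, 1, False),
]

for priority, titles in triage(findings).items():
    print(f"{priority.name}: {titles}")
```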

Retesting matters. A fix is only complete once the original scenario has been tested again and the team confirms the software behaves as expected in the UAT phase.

Sign-off should happen only when key business objectives and user requirements have been validated, critical defects have been resolved or accepted, and stakeholders are confident in the release.
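Those three conditions can be written down as a simple gate. The sketch below is one possible way to express it, assuming you track requirements, critical defects, and stakeholder approvals as small dictionaries; the `sign_off_blockers` helper, the status values, and the sample data are hypothetical.

```python
def sign_off_blockers(
    requirements: dict[str, bool],
    critical_defects: dict[str, str],
    approvals: dict[str, bool],
) -> list[str]:
    """List the reasons sign-off cannot happen yet (empty list means ready).

    requirements: requirement name -> validated during UAT?
    critical_defects: defect title -> "open", "resolved", or "accepted"
    approvals: stakeholder name -> has approved the release?
    """
    blockers = []
    for name, validated in requirements.items():
        if not validated:
            blockers.append(f"Requirement not validated: {name}")
    for title, status in critical_defects.items():
        if status not in ("resolved", "accepted"):
            blockers.append(f"Critical defect still open: {title}")
    for stakeholder, approved in approvals.items():
        if not approved:
            blockers.append(f"Awaiting approval from: {stakeholder}")
    return blockers


# Made-up release state for illustration.
blockers = sign_off_blockers(
    requirements={"Sign-up flow": True, "Checkout flow": False},
    critical_defects={"Payment page times out": "open", "Broken redirect": "accepted"},
    approvals={"Product owner": True, "Legal": False},
)

if blockers:
    print("Not ready for sign-off:")
    for reason in blockers:
        print(" -", reason)
else:
    print("Ready for sign-off")
```

Note that an "accepted" defect does not block the release here, which matches the idea that sign-off is about understood risk rather than zero issues.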

A successful UAT does not mean finding zero issues. It means the team understands the remaining risk, confirms operational readiness, and completes the final quality check before go-live.

9. Document everything for future UAT requirements

Following your UAT process, do a retro to understand exactly what worked and what didn’t.

Record those learnings with the other UAT documentation you’ve created so that you can come back to it for future user acceptance testing work.

User acceptance testing best practices

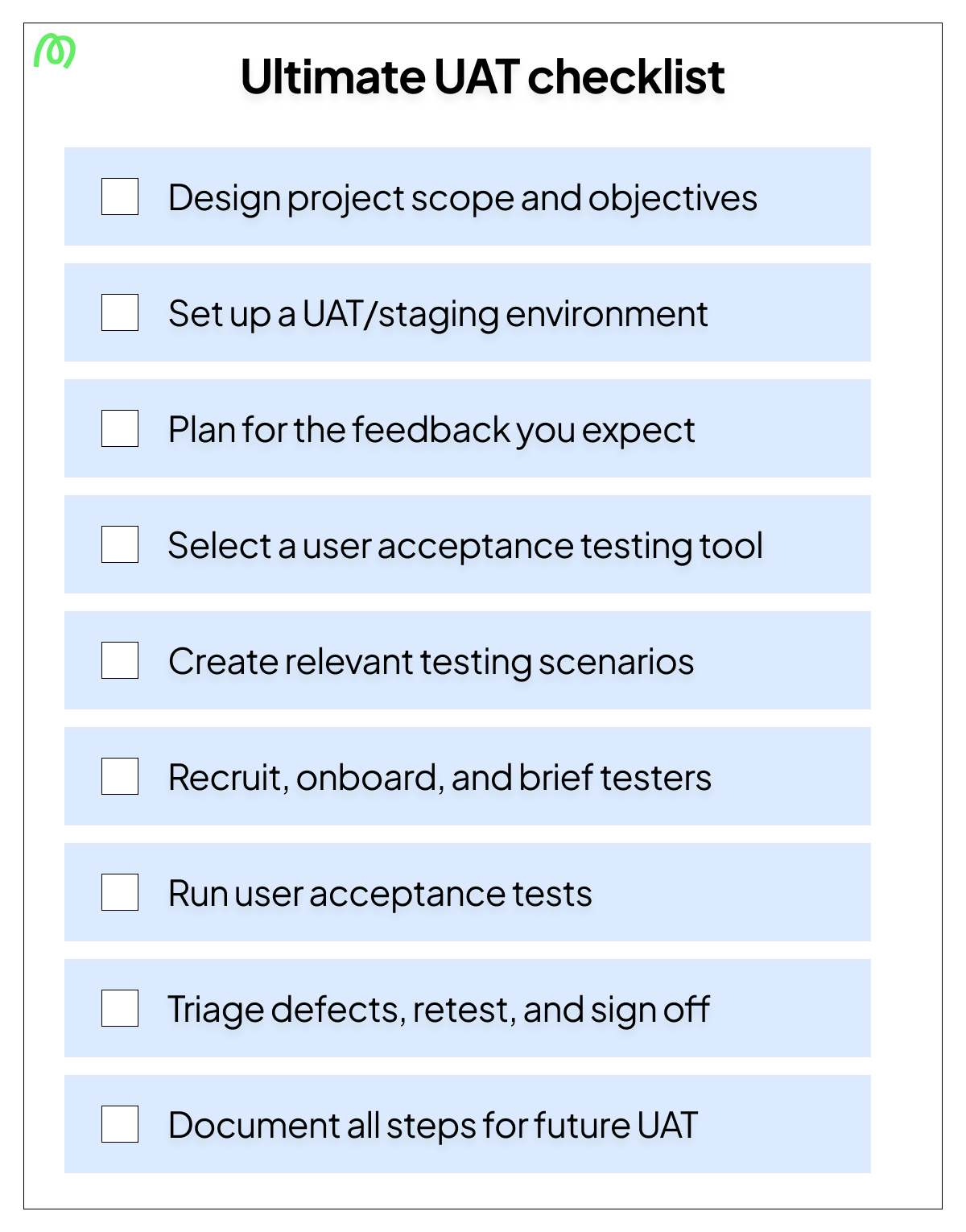

This checklist is a recap of everything we’ve discussed in this post and contains all the best practices for user acceptance testing.

- Design project scope and objectives. Have a clear plan for the new feature or software that you are releasing. During UAT, come back to this document to ensure pain points have been properly addressed.

- Set up a UAT environment. Run tests in a safe environment. Staging should be a near-exact copy of production, which makes it the ideal playground for alpha and beta testers.

- Plan for the feedback you want. Categorize expected feedback into issues that can be handled quickly, by one person, and those that require more consideration.

- Select a user acceptance testing tool. Find a solution that enables end-users to simply and intuitively record issues and share them with your team.

- Create relevant scenarios. Identify what broad scenarios users may encounter that you can then run through during the UAT process.

- Recruit and brief testers. In alpha testing, tell testers about the new feature you’ve built in detail. Make it clear what the business objective is, and what you expect to discover from this testing.

- Run user acceptance tests. Have testers run through the scenarios you’ve defined in realistic conditions, so you can capture issues that could occur in a real-world environment. Encourage testers to flag anything that they see as an issue.

- Triage defects, retest, and sign off. This stage is the purpose of the entire UAT process: actually fixing what doesn’t work.

- Document all steps. Keep tabs on what was fixed, what needs working on, expected challenges in the next iteration, and how the process can be improved.

Great user acceptance testing starts with great organization, so here’s a downloadable version of the best practices checklist you can keep at hand for your next user acceptance testing project.

User acceptance testing checklist

Here's a simple checklist you can download and use to make sure you have completed every important UAT step.

Visit our dedicated blog for a more complete user acceptance testing checklist. Alternatively, you can use one of our user acceptance templates to start your own UAT planning. You can download the full test plan template in this Google Doc.

User acceptance testing examples for websites

Website UAT follows the same core principles as other forms of software testing, but the focus is often on cross-functional workflows, content, and user journeys.

Here are a few common user acceptance testing examples for websites:

1. Sign-up and login flows

Ask testers to create an account, verify their email, log in, and reset their password. This helps confirm that a core journey works smoothly for real users in real-world conditions.

2. Lead generation forms

Have testers complete contact, demo, or download forms using realistic test data, and then check that submissions work, confirmations are accurate, and the right team receives the lead. This is a common acceptance test for B2B websites.

3. CMS publishing workflows

Ask an editor to create, review, and publish a page. This helps validate permissions, approvals, and internal business processes, not just front-end output.

4. Checkout or conversion journeys

For e-commerce or SaaS websites, test the full path from browsing to checkout or signup. These UAT test cases are critical because small issues can affect revenue and user satisfaction.

5. Localization and compliance checks

If your site serves multiple markets, include localized content, regional forms, consent flows, and policy messaging. This may overlap with regulation acceptance testing or contract acceptance testing.

6. Launch readiness

Some scenarios are about more than visible bugs. Redirects, analytics, routing, and support handoffs all affect launch success. This is where website UAT can overlap with operational acceptance testing.

In short, website UAT works best when it tests complete workflows, not isolated pages. The goal is to confirm that the software meets user needs, supports intended users, and is ready for the final phase before launch.

Common UAT challenges

When conducting user acceptance testing, companies can run into the following challenges:

- Poor UAT documentation. A lack of scope, undefined objectives, and no UAT testing plan will cause issues during testing. Getting your entire team (and testers) aligned is crucial for success with UAT.

- Insufficient internal QA. Development teams can save themselves a lot of admin time by fixing bugs upstream, rather than leaving them for the user acceptance testing phase. Ideally, UAT testers should operate in a nearly bug-free environment.

- Lack of a strong testing team. Pre-screen your testers and ensure they are the right target audience for your software. Train them in the different tools and processes you use for testing, and align them with your goals.

- Not using the right tools. For large-scale projects in particular, asking your testers to use Excel, Google Docs, or emails for their reports is a recipe for disaster. Prepare solid bug tracking and reporting solutions to make it easier for everyone involved.

When to use UAT testing services

This article outlines how you can start running your own user acceptance testing with a tool like Marker.io. However, for many businesses, running UAT internally might be challenging – especially for business users without an efficient testing strategy in place.

UAT testing services can help in this situation. A tool gives you the system; a service gives you the people, process, and capacity to run that system properly.

Use a UAT tool when you already have a clear testing strategy, available testers, and someone internally who can manage the process. Marker.io helps you replace manual UAT processes with a cleaner workflow: business users can report issues directly from the website, developers receive the right context, and feedback doesn’t get lost in email, spreadsheets, or screenshots.

That’s often enough for smaller launches or mature teams with established test management tools.

It’s worth considering a UAT service when your project is bigger, riskier, or more complex than your team can handle efficiently.

For example, you may want help from a specialist agency when:

- You’re launching a large website, app, or software product across multiple markets.

- Your internal team doesn’t have time to recruit, brief, and manage testers.

- You need neutral feedback from people who weren’t involved in the build – finding users can be a challenge in and of itself.

- You have complex business needs, compliance checks, localization requirements, or approval workflows.

- Your business users are already stretched with their day-to-day work.

UAT is not the same as automated testing.

Automated testing can tell you whether a button works, a form submits, or a page loads correctly. UAT checks whether the experience makes sense for real people trying to complete real tasks. It’s where you find the messy, human issues that affect customer experience: confusing copy, unclear navigation, broken handoffs, missing content, or workflows that don’t match how the business actually operates.

In large organizations, UAT is typically performed by a mix of internal stakeholders, subject matter experts, and end users. Coordinating those people is often the hard part.

A user acceptance testing service or agency brings structure to the process by defining scenarios, briefing testers, managing feedback, prioritizing issues, and helping your team reach sign-off faster.

You can even choose an agency that already uses your preferred UAT tool. The feedback process then stays consistent, whether it’s handled externally or brought in-house. Your agency, internal teams, and developers all work from the same reporting flow.

In short: use a tool when you have the team to run UAT yourself. Use a service when you need extra testing capacity, process maturity, or independent validation.

Wrapping up...

UAT’s meaning, in short: User acceptance testing gauges how well your app or website meets customer needs and business requirements.

To conduct effective UAT, ensure you’re collecting that information in the best possible way. In other words, have a system.

The resources and ideas shared in this post are your guide towards building that system, with guidance to help you put together your next user acceptance testing session.

Frequently asked questions

When does UAT happen in the software development lifecycle?

User acceptance testing happens near the end of the software development lifecycle (SDLC), after the product has already passed the main internal software testing stages.

In a typical SDLC, teams move through requirements, design, development, and then several testing phases such as unit testing, integration testing, system testing, and quality assurance. Once those checks are complete and the product is stable enough to validate in real-world conditions, the UAT phase begins.

That makes UAT one of the last steps before launch.

How to build the right UAT team?

The right user acceptance testing team will consist of:

- A project manager, in charge of overseeing UAT execution from start to finish.

- A documentation specialist who will define scope and objectives, create a user acceptance testing test plan, and help draft test cases.

- A QA lead in charge of pre-screening, onboarding, and training testers.

- A set of testers, ideally existing users or customers, but also business stakeholders.

Can you automate user acceptance testing?

To an extent, yes. Instead of manual test scenarios, simply push releases to your test environment.

Then, run a couple of automated test cases that’ll test the functionality of your app.

However, we recommend having actual software users test your website. At the end of the day, this is the only way to make sure your users are satisfied with the app.

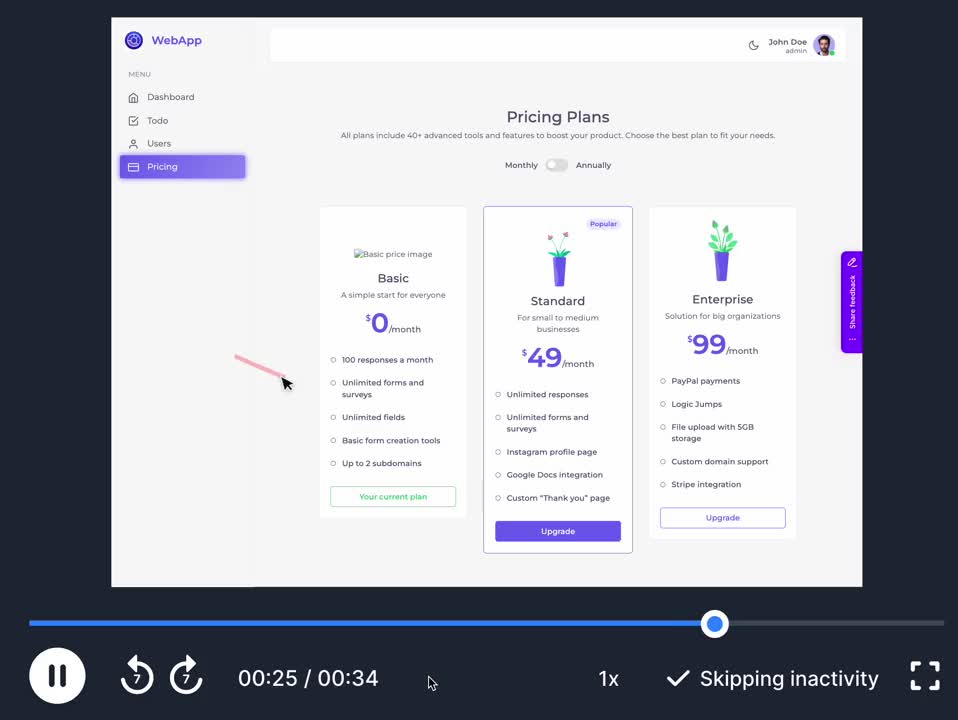

What is a user acceptance test? (with examples)

A user acceptance test is a single test run during UAT execution.

The goal is to find out if the end user has an easy time completing the test and if they run into any issues.

Here’s a user acceptance testing example:

- Sign up for the tool and upgrade to our “Standard” plan.

- Try to create a project and add a couple of tasks to this project.

- Attach a file to task #3.

- Invite your team to join you.

Who writes UAT test cases?

The QA team is usually in charge of test management and writing test cases.

They manage the entire process:

- Writing test scenarios that satisfy business requirements

- Setting up a staging environment

- Onboarding testers for user acceptance testing

- Analyzing results of test cases

What are some tools that help successfully perform UAT?

There are many tools that can support your user acceptance testing process.

We recommend having:

- An error monitoring system like Sentry

- A user acceptance testing solution like Marker.io

- A test case management system or bug tracking tool like Jira, Trello, etc.

Looking for more UAT tools? Check out our list of the best user acceptance testing tools out there – and get even more insights from your users.

What should I do now?

Here are three ways you can continue your journey towards delivering bug-free websites:

Check out Marker.io and its features in action.

Read Next-Gen QA: How Companies Can Save Up To $125,000 A Year by adopting better bug reporting and resolution practices (no e-mail required).

Follow us on LinkedIn, YouTube, and X (Twitter) for bite-sized insights on all things QA testing, software development, bug resolution, and more.
